Authors:

Matthew Beane – UCSB Technology Management Program

Interviewers:

Danielle Bovenberg – UCSB Technology Management Program

Mayur P. Joshi – Ivey Business School

Article link: https://doi.org/10.1177/0001839217751692

Part A: Formal Interview

1. While the primary focus of the paper is on trajectories of learning in communities of practice, a second theme concerns the management of risk and error in this new form of surgery. Since learning requires error, the management of risk and learning seem to be intertwined. How do you think about this?

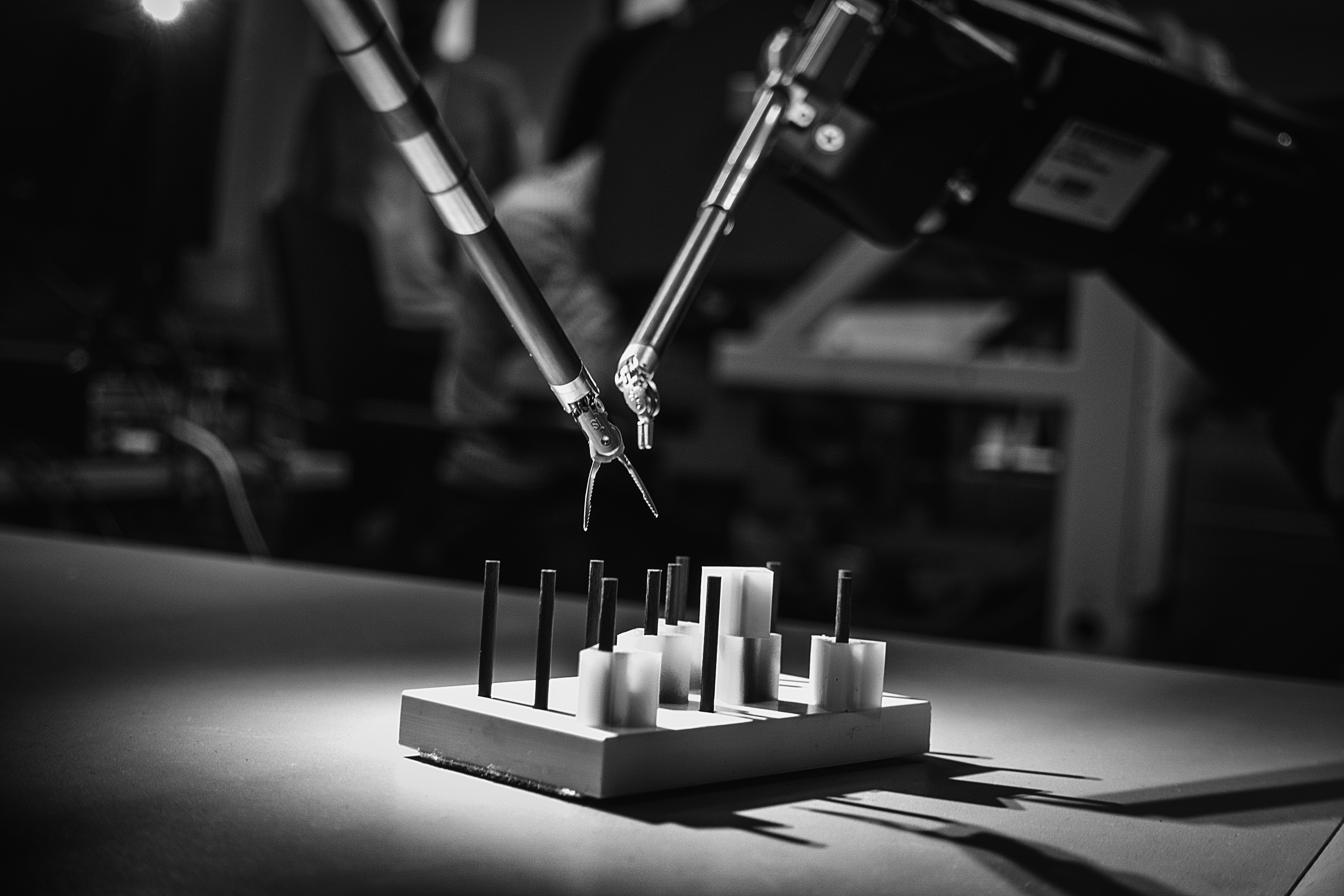

Thanks for this question — very interesting territory to think about more deeply. In a number of places in the paper, those who succeeded at learning were seeking work that challenged them. That is not the same as risk, though. I think my case suggests that the kind of risk that matters for learning is more socially constructed than it is a matter of any “objective” characteristics of the work, and therefore it’s more a matter of observability, perception and power. A classic example of this is Bosk’s (1979) “quasi-normative errors” where a resident could learn a best practice from one physician, only to discover that the next physician in their rotation on the very same unit with the very same patients treated that practice as wildly inappropriate. And so, from that angle, working with the robot increased “risk” in that it made the resident’s surgical actions much more observable and obviously “theirs” than in open surgery.

2. What are your thoughts on the risks involved for the organizations using robotic surgery, and how might you extend these ideas to risks involved in robotics in the workplace more generally?

Your definition of risk is very consequential for how you answer that question, because the assessment of risk differs depending on your perspective. For the CFO of a hospital, the assessment of risk focuses on the fiscal health of that institution, including matters of the law (malpractice suits and so on) and the cost of finding, purchasing, maintaining and running these kinds of systems as contrasted with open surgery. Then for the COO, the idea of risk would have to do with matters of efficiency and quality. And then of course there’s the person who cares about that institution’s brand, market share, and reputation: on the one hand, as being a “forward-thinking, early-adopter, innovative” type of institution versus, on the other hand, one that is focused on patient safety and quality. You can see that in this question of risk there’s a very small part of it that has to do with this focal act of “is it safe if I go do X,” where “X” is resecting or suturing tissue.

And I think this gets to your broader question. The more complex the technology, the less obvious, more distributed, and more complex questions of risk become. The technology is complex in materiality terms: there are lots of interchangeable parts, lots of components, they are quite interdependent, they are computationally controlled, they collect data on their operation and so on. But it’s also complex from a business model perspective. Essentially, you’re buying a Gillette razor kind of technology: Da Vinci makes most of their money over time on the disposable instruments that click into the thing, the sterile drapes that go over it and the service contract. All of that introduces a lot more uncertainty and complexity into the situation in terms of risk. I don’t have a definitive view that intelligent technologies (my label for things like robotics and AI these days) are in some way categorically distinct from other technologies in this regard. But having watched an organization, a field, a team and individuals manage this technology to try to get work done, I can say that working with this system is far more complicated than getting the work done with sterilizable steel tools. Maybe less risky, but maybe not! We’ve always, for the sake of analysis, bracketed the phenomena that we studied. And it is harder and harder to justify that now, is all I would say. Framed positively, if you’re focused on a particular technology, I think it’s incumbent on you to understand the implications of that complexity for risk.

3. How did you design the study, which uniquely combines comparative ethnographic study with blinded interviews, both at multiple sites? Specifically, what was the rationale behind the blinded interviews?

The first and most important thing to say is that I had exceptional mentorship. The one thing that I think I do, maybe to a fault, is to seek compelling phenomena and to gather as much data as I can. Originally I did actually focus on training and learning in my IRB [Institutional Review Board] proposal: how one learns given this new technology. But I had all of these sites, and without my dissertation committee, I would have really been lost. They were an exceptional set of guides. For example, in John Van Maanen I had someone who at base was utterly insistent on living with, living like, and being insatiably semi-objective and curious. My demeanor was always very much sort of chipper, open, curious and yet quite insistent, asking questions and pushing for access to data.

Wanda Orlikowski inspired me to turn that curiosity equally towards artifacts and practices. I’ve always been very into technology and curious about technology, but now I began to interrogate it with equal intensity: how does that arm work? Where does the code live? What kind of cable is that? And how does it connect to there? And how was this set up and used originally, and how is it done now? Those kinds of things. I think that was critical, really. And with Kate Kellogg, I distinctly remember a conversation where I said, “You know, I’m now going to go try to find more shadow learners” (I had that phrase at some point early on, or these “cheating learners”). I told her I was going to go try to find these people and I swear she just casually said (she doesn’t remember saying this) something like, “It would be great if you could interview them without knowing whether they were or weren’t.” And I had learned to trust her, so I took a week and thought, “Well, how would I do that?” I knew about snowball sampling methods and I knew about placebo-controlled trials. So this is the best that I could come up with, and I came back to my committee having executed on it and they said, “Well, that’s fantastic,” and Kate said, “Where did you come up with that idea?” I think I laughed out loud! Attribution aside, I dropped a lot of potential data collection opportunities to focus on these new targets.

4. While intelligent technologies may pose challenges for professionals in learning their craft, these technologies may also pose challenges for researchers to understand the complexities of the phenomenon. How did you make sure to learn and develop your theoretical insights amidst such a complex setting?

I have a two-part answer here. One relates to the researcher’s focus and the other to the characteristics of the technology. First, having a research question was helpful in navigating complexity. My primary question was about skill acquisition in the condition of open surgery versus in the condition of robotic surgery for formal apprentices. So, I tried to get data on that with each visit. Within a couple of months, I had noticed, wow, trying to learn robotic surgery via previous methods looks really counterproductive and most everyone seems fine trying anyway. Conversely, everyone seems to be complaining about this robot, but the open method is butchery by comparison – and I don’t say that lightly! So, the learning question became more and more compelling as I went on, and that helped me focus.

Second, there is a distinction within the class of intelligent technologies that I think is important. Ethnography of work involving robotics is, in a way, far easier than an ethnography of work involving “screens and servers” AI. First, the work proceeds in space and time resolutions that are amenable to human observation. A robot doesn’t move five orders of magnitude faster than a human, but a high-frequency trading algorithm can. So, when the robot gets deployed into the work, you can compare it in a much more “apples to apples” fashion with non-robotic equivalents. But more fundamentally, you can see how the work changes with your eyes, you can walk around it and see the work being performed in human-familiar time frames. My colleagues engaged in digital ethnography needed to work much harder at observation than I did. I discovered this only when I hit the job market, actually. People asked me, “Why robots?”, and my earlier answer on that was, “I am just compelled”; I had no theoretically salient answer to that question. But now I do.

5. What was your favorite part of fieldwork and what was the most difficult part of the fieldwork in these settings?

On a certain level my favorite part of fieldwork has to do with building relationships and gaining access. Some of the most interesting data, to me, comes in the moments in which you are negotiating access or explaining what you are after. I try to get the IRB approvals done way in advance, so that I can record some of these very early conversations with people. Because you never get those quotes back! The things they say about what you are about to step into are often the most precise and compelling summation of the phenomena you are about to encounter. So I’ve found that powerful from a data perspective, and an engaging and energizing challenge.

This challenge and energy in data access never really stops for me. For instance, on a given day towards the end of a procedure, say a person hints about a database managed by someone in the billing department that might be useful. At that moment, you have a choice: to say yes and ask that person about the data or say nothing due to the tiring day and the fear of asking. My job is always to be assertive, to make the answer yes. To me that’s a bit of a thrill and a challenge to overcome that fear. This speaks to the difficult part of the fieldwork. To just say, “Yes please, I would love to speak to them today, is there a way we can make that happen?” It may lead nowhere, but it may also help later. It’s full of fear, but it’s also part of what I enjoy. People often say, “Oh my God, how could you be in the OR and watch all this blood and gore during the surgery?”, and I say, it was a profound experience but that wasn’t that difficult by comparison. Difficult is overcoming the fear of trying to ask and impose in a helpful way over and over and over again.

6. How did you come up with the codes in your analysis? Phrases like “shadow learning” and “helicopter teaching” convey very precise meaning, yet seem very hard to think of. How did the concepts emerge from the data?

I am rewriting a paper right now, where I could settle for a reasonably accurate yet non-interesting term, but I don’t like to walk that path. There is one person who stands out here as defining my standard and that’s Leslie Perlow. During my PhD, I was in a qualitative inductive seminar of hers that ran for a good number of years. She didn’t say it often, but she said it with great force of will: that you had better name what you’re theorizing very carefully. She gave two reasons: one is sort of career-oriented and practical. You could become known for that thing and if it’s wrong or doesn’t inspire you, you’ll become just slightly well known for something you don’t really like. And then you’ll have to develop it. Not a recipe for a satisfying first tack in your career.

The other reason had to do with craft and value. If we want our theories to actually matter in the world, they need to have an intuitive appeal and yet be disturbing and provocative in some way. If your terms are a mouthful or slightly off the mark, people will say “Oh yeah, I know what you’re talking about, it’s X,” while in fact you were talking about X’ or Y. Or they just won’t get used because they don’t inspire. For me this taps into the ethos of a copywriter, someone who writes movie titles or news headlines. So, with this paper in particular, I just decided not to settle until I had an entire “whole-body yes” to these kinds of names: not just an intellectual yes, but the kind where you can feel sensations throughout your body, when you just know something is right.

I would say, if I had a wish for our Organization Theory discipline or management scholars in general, it would be that we spend more time being precise in this way – for the sake of impact, for the sake of creating value in the world, and also for the sake of a job well done.

Part B: “Shadow” Interview

Prior to joining the academy, you worked in different industry settings like robotics and consulting. How do you bring skills and the experiences from the industry to academia and vice versa?

I will start with how my experience in consulting is related to my research. I joined a firm founded by one of Chris Argyris’s students. That work was based in action science (e.g., model 1 and model 2 learning in Argyris, 1976), the next logical extension of Douglas McGregor’s work, and in line with Kurt Lewin’s old adage, “If you want to understand something, try to change it”. So, I developed materials around, and worked very hard for years on, the personal comportment and techniques required to engage with compassionate curiosity in the face of very intense negative interpersonal stuff. We tended to get hired to consult when a group of executives, for example, were destroying a company because they were avoiding a particular topic or talking about that topic in toxic ways. We would come in and help them interact more productively or do capacity building in a firm. The skills associated with this type of consulting work are very closely aligned with qualitative research. For example, you need to inquire directly, with curiosity and compassion, into the things that don’t make sense, and jointly design next steps with people. With that experience, qualitative research was a natural fit.

In terms of my career journey, I have had an abnormal relationship with academia. I transferred through 3 high schools, I almost failed out of one of them, and almost didn’t go to college. When I started college, about halfway through my first year, I decided to take an 8-month break with a friend and drive a car around the United States to visit all the national parks, not thinking I was going to come back to undergrad studies. I started as a math major, came back to a philosophy major – I started to read Greek philosophers on that trip and I realized, “Oh, this is a profession. I can go to school for this. So, okay: that’s my profession!” Then it took me 14 years between the end of undergrad and coming back to get my PhD. Later on, in the midst of my PhD, I took a 2-year break to go help found and fund a start-up. I decided that I would leave academia and go found this start-up because it was going to change the world. I like building things, I like running organizations, I like solving problems, not just studying them. Finally, the twist inside the twist is that in the mornings when I was trying to finish my dissertation, I discovered this is more fun, more energizing, and more challenging than working in the start-up. So, here I am, back in academia. I think I actually have found my home!

So it probably isn’t a surprise that I have a great deal of mixed feelings about our profession in general and our speciality in particular. Steve Barley’s piece in ASQ (Barley 2016) in which he reflects on theory building resonated here. He was critical about theory being the coin of our realm. I have major concerns about that too, as well as about the structure of our profession. It was in a way a great relief to leave academia because I didn’t want to perpetuate that system. Now, having come back, I know my job is to try and help from within.

So to the extent that I do anything that is different, it’s at least partly because I am of mixed mind about what I’m engaged in. On the one hand, I dearly love some of what I get to do. I am so grateful, and it is so much fun. I think it can add value. On the other hand, some other things that we do in our profession offend me. Specifically, given the problems that are going on in the world, for instance, I often ask “how am I helping the guy who I just saw this morning stocking the shelves in the grocery store?” That’s a problem that really is important to me.

References cited:

Argyris, C. (1976). Single-loop and double-loop models in research on decision making. Administrative Science Quarterly, 21(3), 363–375.

Barley, S. R. (2016). 60th Anniversary Essay: Ruminations on How We Became a Mystery House and How We Might Get Out. Administrative Science Quarterly, 61(1), 1–8.

Bosk, C.S. (1979). Forgive and Remember: Managing Medical Failure. Chicago, IL: University of Chicago Press.

Mayur’s Bio: Mayur is a Ph.D. candidate in Information Systems at Western University’s Ivey Business School, Canada. His research examines the phenomenon of organizing for and in the digital age, with the current fieldwork focused on the changing nature of information production and decision making in the wake of big data analytics and AI. URL: https://www.research.manchester.ac.uk/portal/mayur.joshi.html

Danielle’s Bio: Danielle is a Ph.D. student in the Technology Management Program at UC Santa Barbara. Her current fieldwork explores how scientists and technicians collaborate in nanotechnology research. URL: https://tmp.ucsb.edu/about/people/danielle-bovenberg